AtCoder Grand Contest 038

Updated:

Source codes

Solutions

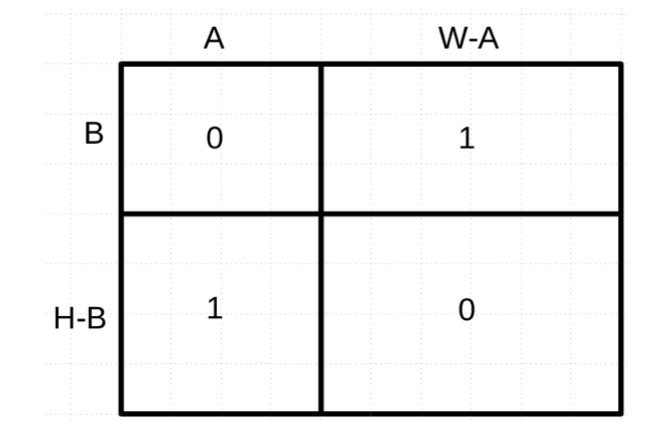

A - 01 Matrix

Solution

For instance, the following picture gives a solution (from the official editorial).

B - Sorting a Segment

Let $I _ i = [i, i + K)$. We also use $I _ i$ to denote the manipulation for it.

Observation

Consider two manipulations $I _ i$ and $I _ j$. When do they give the same result? If $I _ i \cap I _ j = \emptyset$, it will mean the both ranges $I _ i$ and $I _ j$ have already been sorted and the resulting sequence is the same as the original sequence.

If $I _ i \cap I _ j \neq \emptyset$, it will mean $I _ i \setminus I _ j$ and $I _ j \setminus I _ i$ have both already been sorted. Therefore for each $i \leq k \leq j$, $I _ k$ gives the same result. Thus we consider the relation only between $I _ i$ and $I _ {i + 1}$.

Solution

So, our strategy is composed of two parts. We use an union-find tree to keep the states. We use $I _ {N - K + 1}$ to denote the original state. We merge $i$ and $j$ if and only if $I _ i$ and $I _ j$ gives the same result. The answer is the number of connected components, being careful of $I _ {N - K + 1}$.

Let’s see the details with implements to avoid tiny mistakes.

Connect $I _ i$ and original state

As we argued just now, if $I _ i$ are already sorted, it will give the original result. This is equivalent as follows of course. \[ a _ i < a _ {i + 1} < \dots < a _ {i + K - 2} < a _ {i + K - 1} \tag{B.1} \]

We define

$ascend[i] = 0$ if $a _ i < a _ {i + 1}$, $1$ if $a _ i > a _ {i + 1}$,

$ascend\_sum[i] = \displaystyle \sum _ {j = 0} ^ {i - 1} ascend[j]$.

(B.1) is equivalent to \[ 0 = \sum _ {j = i} ^ {i + K - 2} ascend[j] = ascend\_sum[i + K - 1] - ascend\_sum[i]. \]

Connect $I _ i$ and $I _ {i + 1}$

$I _ i$ and $I _ {i + 1}$ give the same result if and only if $P[i]$ is the minimum of $I _ i \cup I _ {i + 1}$ and $P[i + K]$ is the maximum of $I _ i \cup I _ {i + 1}$. This is a very typical RMQ. But we work on it by set<int> S;

We consider the minimum part. Let $Q = P ^ {-1}$. For $k = 0, \dots, N - 1$, let $e = Q[k]$. We execute S.insert(e);. If $0 \leq e \leq N - K - 1$, we have to determine whether $e$ is minimum on $I _ {e} \cup I _ {e + 1}$ or not. We use auto it{S.find()};, auto it1{it};, ++it1;. If it1 == S.end() or *it1 - e > K, it will mean $e$ is the minimum element of $I _ {e} \cup I _ {e + 1}$.

We consider the maximum part. We use another S. Let $Q = P ^ {-1}$ and reversed. For $k = 0, \dots, N - 1$, let $e = Q[k]$. We execute S.insert(e);. If $K \leq e \leq N - 1$, we have to determine whether $e$ is maximum on $I _ {e - K} \cup I _ {e - K + 1}$ or not. We use auto it{S.find()};, auto it1{it};, --it1;. If it == S.begin() or e - *it1 > K, it will mean $e$ is the minimum element of $I _ {e - K} \cup I _ {e - K + 1}$.

C - LCMs

The idea of solution

For $x, y \in \mathbb{N} _ +$, we have \[ \mathrm{lcm}(x, y) = \frac{x y}{\mathrm{gcd}(x, y)}. \] Suppose that $\mathrm{gcd}(x, y) = 6$. We want to use the inclusive-exclusive principal here. First we set $l = xy$. Since $\mathrm{gcd}(x, y) \% 2 = 0$, we should subtract $xy / 2$ from $l$. Since $\mathrm{gcd}(x, y) \% 3 = 0$, we should subtract $2xy / 3$ from $l$, too. Since $\mathrm{gcd}(x, y) \% 6 = 0$, however, should we $xy / 6$ from $l$? It’s not true. We have already had $l = xy - xy / 2 - 2xy / 3 = -xy / 6$. So we should add $xy / 3$ to $l$ to have $l = xy/6$.

Solution

Therefore, first we suppose that there exist a good coefficient $C[d]$. Let $C[d]$ be so that for any $g \in \mathbb{N} _ +$, it holds that

\[

\sum _ {d | g} C[d] = \frac{1}{g}.

\]

Then, we have

\[

\begin{align}

X & = \sum _ {i, j \in N} \mathrm{lcm}(A _ i, A _ j) \\

& = \sum _ {i, j \in N} \frac{A _ i A _ j}{\mathrm{gcd}(A _ i, A _ j)} \\

& = \sum _ {i, j \in N} \left( \sum _ {d | \mathrm{gcd}(A _ i, A _ j)} C[d] \right) A _ i A _ j \\

& = \sum _ {d} C[d] \sum _ {d | A _ i, d | A _ j} A _ i A _ j \\

& = \sum _ {d} C[d] \left( \sum _ {d | A _ i} A _ i \right) ^ 2.

\end{align}

\]

This is computable in time. Let $M = \max _ i A _ i$. Each sum of $A _ i$ can be calculated in $O(M / d)$-time. So the time complexity is $O(M \log M)$.

The remaining here is how to compute $C[d]$. But this is not difficult. We can just use the following equation. \[ C[g] = \frac{1}{g} - \sum _ {d | g, d < g} C[d]. \] We work on it like the sieve of Eratosthenes. First we initialize $C[i] = 1 / i$. Then, we execute the following code.

for (auto i = 1; i < M; i++)

{

for (auto j = 2 * i; j < M; j += i)

{

C[j] -= C[i];

}

}

The time complexity is $O(M \log M)$. Thus we obtain the answer as follows. \[ ans = \frac{1}{2} \left( X - \sum _ {i \in N} A _ i \right). \] The total time complexity is $O(N + M \log M)$.

D - Unique Path

Solution

If it is a tree

Suppose that $M = N - 1$. In this case the graph $G$ must be a graph. Thus for all $i \in Q$, $A _ i$ must be $0$ and the answer is Yes if and only if $A _ i = 0$ for all $i \in Q$.

Otherwise

We have at least one loop. We consider the queries $\{ (A _ i, B _ i, C _ i) \}$ as follows.

- If $A _ i = 0$, $B _ i$ and $C _ i$ are on the same “sub-tree”. We call this type of a subtree a unit. We consider as if $B _ i$ and $C _ i$ are the same one vertex. We consider this type of queries first. We use a union-find tree.

- If $A _ i = 1$, $B _ i$ and $C _ i$ must not be in the same unit. So we check this this type of queries after the above. If we find an error here, halt with flushing

No.

Let $K$ be the number of the units constructed the above things. Let $X$ be the sum number of the edges inside of each unit. $X$ does not depend on its topological shape of each unit because it is just a tree on each unit.

How can we adjust the number of the resulting edges equal to $M$? The minimum is $X + K$. Note that it must be equal to $N$ since the resulting graph will be a pseudo-tree. The maximum is $X + K(K - 1) / 2$. We can connect every two units arbitrarily but we cannot add more since if we added more edge, it would break the rule of unit. So we check the following inequality.

\[

X + K \leq M \leq X + \frac{K(K - 1)}{2}.

\]

If it holds, the answer is Yes; otherwise, No.

E - Gachapon

Basic ideas

I think that in this problem it is difficult to understand the initial step. So let’s see some fundamental examples.

The easiest example

Suppose that you play Fate/Grand Order and you want to summon Astolfo (Saber) and you want his Noble Phantasm to be level 5, as just I did yesterday (I am writing it on 2019-11-29). How many times will you need to summon in terms of the expected time?

According to the official document, the probability to summon him is $p = 0.008$ each time and you need to summon him $B = 5$ times to get NP5. Of course we can easily calculate the expected time. It is $(1/p) \times B$. We see the details carefully. What happens here?

What you can do is to continue to summon until you reach NP5. At first, you get no Astolfo, i.e. NP0. You start to summon, and get him. It’s NP1. You continue to summon, and get him to obtain NP2. You continue to summon until you obtain NP5. Here, we see the fundamental idea: To get him $B$ times, you have to spend each “NP $i$ state” to get NP $(i + 1)$ for $i = 0, 1, \dots, B - 1$, and the expected time will be their sum.

In this case, for each state, the expected time is the same: $1 / p$. So the answer is $B / p$.

Second simplest example

We think of the case $N = 2$, $B _ 0 = B _ 1 = 1$. Let’s suppose that you summon Astolfo (Rider) and Jeanne (Ruler) to NP1, i.e. $p _ 0 = 0.015$ and $p _ 1 = 0.008$.

You start by $(0, 0)$. Here, we have two probabilities: who will come firstly, Astolfo or Jeanne. If we have Astolfo first, it’s $(1, 0)$. But you have to continue until you succeed to summon Jeanne. This is an important point that was hidden in the previous example. If you are so lucky to get Astolfo NP5, it doesn’t mean the summon is over, i.e., $(5, 0)$ is not the terminated point. You have to summon Jeanne.

In this point, what will be the expected time? As a lesson from the previous example, the expected time will be the sum of the expected time when you are not satisfied. In this case it will be the sum of $(0, * )$ and $( *, 0)$, but be careful of the case $(0, 0)$. This is included in both of them. So we subtract it from the answer. We will use the inclusive-exclusive principle.

We can calculate the answer as follows.

- In the case $(0, * )$, the expected time will be $1 / p _ 0$. We add it to the answer.

- In the case $( * , 0)$, the expected time will be $1 / p _ 1$. We add it to the answer.

- In the case $(0, 0)$, the expected time will be $1 / (p _ 0 + p _ 1 )$. We subtract it from the answer.

The answer is \[ \frac{1}{p _ 0} + \frac{1}{p _ 1} - \frac{1}{p _ 0 + p _ 1}. \]

We will work the same thing in more general way.

Solution

Let $p _ i = A _ i / S$. We start by $(0, \dots, 0)$. We continue to summon until we reach the state $(C _ 0, \dots, C _ {N - 1})$ that satisfies $C _ i \geq B _ i$ for all the $i \in N$. The answer is the sum of all “unsatisfactory” states’ expected time. Here we use the fact that the expected time of the sum is equal to the sum of the expected time of each.

We consider the state $(C _ 0, \dots, C _ {k - 1}, C _ k, \dots, C _ {N - 1})$ that satisfies $C _ 0 < B _ 0$, …, $C _ {k - 1} < B _ {k - 1}$. What is the expected time during which this state continues?

First, we consider the coefficient for the inclusive-exclusive principle. We should $+$ when $k = 1$, that is, $(- 1) ^ {k + 1}$.

Second, we consider the probability in that this state happens. Just “ignoring” the probability for $i \geq k$, we obtain it as follows. \[ \frac{ \left( \sum _ {i \in k} c _ i \right)!}{c _ 0 ! \dots c _ {k - 1}!} \cdot \frac{p _ 0 ^ {c _ 0} \dots p _ {k - 1} ^ {c _ {k - 1}}}{ \left( \sum _ {i \in k} p _ i \right) ^ {\sum _ {i \in k} c _ i}}. \]

Finally, we estimate the expected time to last this state. Once it happens, it will continue until we summon $i < k$. So it is $1 / \sum _ {i \in k} p _ i$.

Therefore, we have the following result. \[ (- 1) ^ {k + 1} \frac{ \left( \sum _ {i \in k} c _ i \right)!}{c _ 0 ! \dots c _ {k - 1}!} \cdot \frac{p _ 0 ^ {c _ 0} \dots p _ {k - 1} ^ {c _ {k - 1}}}{ \left( \sum _ {i \in k} p _ i \right) ^ {\sum _ {i \in k} c _ i}} \cdot \frac{1}{\sum _ {i \in k} p _ i}. \tag{E.1} \]

Convert it into DP

We have to add up all (E.1) for all $2 ^ N B _ 0 \dots B _ {N - 1}$ cases. Let’s convert it into DP. Seeing (E.1), once we keep the $\sum c _ i$ and $\sum p _ i$ as the keys of DP, the transition is the same, i.e. to multiply \[ (- 1) \frac{p _ k ^ c}{c!}. \] This fact solves the problem.

Definition

$dp[k][X][Y] = $ the sum of \[ (- 1)^{s + 1} \frac{p _ x ^ {c _ x} \dots p _ {y} ^ {c _ {y}}}{c _ x ! \dots c _ {y}!} \] part of (E.1) with $X = \sum c _ i$ and $Y = \sum p _ i$, considering $i = 0, \dots, k - 1$.

We hold $dp$ by vector<vector<map<mint, mint>>>.

Answer

The answer is \[ \sum _ {X} \sum _ {Y} dp[N][X][Y] \frac{X!}{Y ^ {X + 1}}. \]

Initial state

As we argued before, we have to add when the element size is $1$. So the initial state is

\[

dp[0][0][0] = -1.

\]

Since we have the last key as the key of map<mint, mint>, we initialize the size of $dp$ is $N + 1$. Let $M$ be the sum of $\{ A _ i \}$ $+1$. We initialize the size of $dp[0]$ as $M$.

Transition

For $k = 0, \dots, N - 1$, first we copy the vector: $dp[k + 1] \gets dp[k]$. This means that we don’t choose $k$-th element. Then, for all $X$, for all $Y$, for all $0 \leq c < B[k]$, we execute the following manipulation.

\[

dp[k + 1][X + c][Y + p _ k] \mathbin{ {-} {=} } \frac{p _ k ^ c}{c!} dp[k][X][Y].

\]

This means that we choose $k$-th element for binding $c$ items.

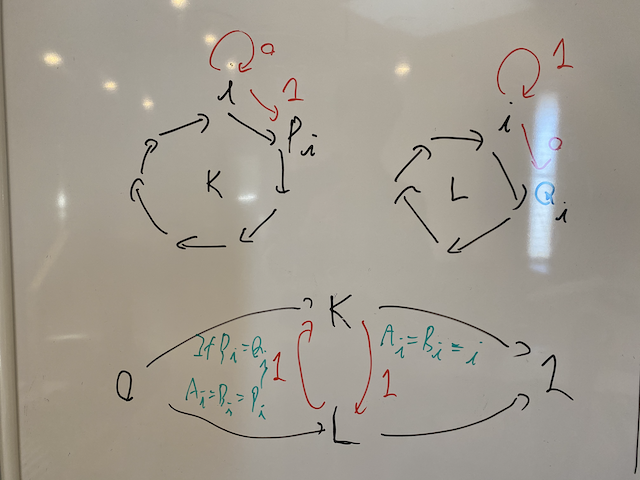

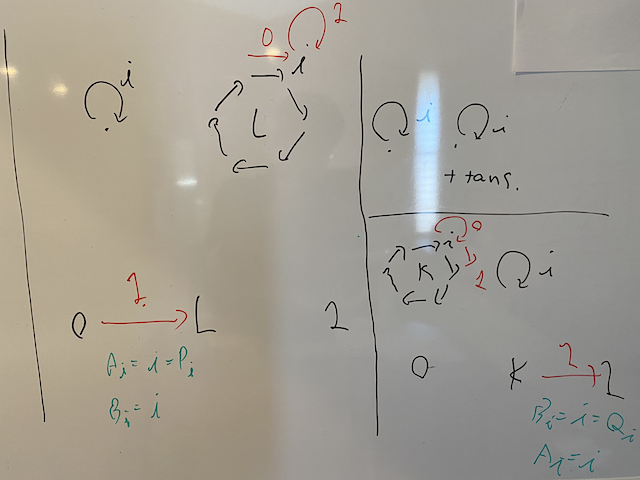

F - Two Permutations

Solution

For $P, Q \in \mathfrak{S} _ N$, we discompose each of them into a set of cycles. Fix $i \in N$. Let $K$, $L$ be the cycle including $i$ on $P$, $Q$ respectively.

Main cases

To simplify the problem at first, we consider the case $i \neq P _ i$ and $i \neq Q _ i$. Then choosing $A _ i = i$ forces us to choose $A _ j = j$ for all elements on the cycle $K$. Otherwise, i.e., choosing $A _ i = P _ i$ means that we have to choose $A _ j = P _ j$ on $K$. So, there are exactly two states on $K$; “stable” or “advanced”.

The answer is $N - penalty$, where $penalty$ is the number of the elements $i$ so that $A _ i = B _ i$. When does $i$ cause a penalty? Of course if we choose “stable” both on $K$ and on $L$, it causes that $A _ i = B _ i = i$, which means a penalty. In addition, if $P _ i = Q _ i$ and we choose “advanced” both on $K$ and on $L$, it causes a penalty with $A _ i = B _ i = P _ i (= Q _ i)$.

As we argued before, there are two states for each cycle. So we want to solve this problem by minimum cut on the directed graph. On $K$, we use the node $0$ to denote “stable” and $1$ “advanced”. In addition, we use those two node conversely on $L$: The node $1$ means “stable” and the node $0$ means “advanced”. This is the key of this problem. Thus we can say as follows.

- For each $i \in N$ with $i$ not an isolated point either on $K$ or on $L$,

- If we connect $K$ with $0$ and $L$ with $1$, we have a penalty.

- Provided $P _ i = Q _ i$, if we connect $K$ with $1$ and $L$ with $0$, we have a penalty.

Other cases

Other cases are rather simple.

- Suppose $i \in N$ is an isolated point both on $K$ and on $L$, we have a penalty.

- Suppose $i \in N$ is not an isolated point on $L$ but on $K$, if we connect $L$ with $0$, we have a penalty.

- Suppose $i \in N$ is not an isolated point on $K$ but on $L$, if we connect $K$ with $1$, we have a penalty.

Memo on minimum cut

To solve the problem by minimum cut, how to convert constraints into edges is critical. To solve this problem, I felt difficulties on this point. So I write here a memo.

We consider the directed graph on $S$ to $T$. We use those characters S and T to denote the states of $S$ and $T$. Tips here are to consider the state the cut itself is done.

Simple constraints

Consider a node $A$.

- The state “if $A$ is

S, we have a penalty $x$” is presented as an edge $e = (A, T, x)$.- This is because to make cut with $A$ being the side $S$, we have to eliminate $e$; otherwise there would be a path $S \to A \to T$, which would not be a cut.

- The state “if $A$ is

T, we have a penalty $x$” is presented as an edge $e = (S, A, x)$.

Complicated constraints

Consider two nodes $A$ and $B$.

- The state “if $A$ is

Sand $B$ isT, we have a penalty $x$” is presented as an edge $e = (A, B, x)$.- Suppose we have made a cut. There are four possibilities. If both $A$ and $B$ is

S, we don’t have to eliminate $e$ to make a minimum cut since it is meaningless. The case that both $A$ and $B$ isTis the same. If $A$ isTand $B$ isS, we don’t have to eliminate $e$ to make a minimum cut since $e$ is just a reversely directed edge. So eliminating $e$ means that the cut is such that $A$ isSand $B$ isT. On the contrary, suppose we have made such a cut that $A$ isSand $B$ isT. Then, we must have eliminated $e$; otherwise we would have a path $S \to A \to ^ e B \to T$, which would not be a cut. Therefore we eliminate $e$ if and only if we make a cut with $A$ beingSand $B$ beingT.

- Suppose we have made a cut. There are four possibilities. If both $A$ and $B$ is

- The state “it is impossible that $A$ is

Sand $B$ isT” is presented as an edge $e = (A, B, \infty)$.